In this tutorial, we will show you how to configure and remotely control Trossen Robotics' PhantomX PincherX 100 Robot connected to Leo Rover.

PincherX 100 Mobile, with its 4 DOF and 300 mm reach, is on the smaller side of robotic arms; however, it is big enough to be a useful extension for a Leo Rover. This tutorial outlines the basics of connecting it to the rover and shows how some ROS packages can be used with it. Robotic arms are used everywhere from education and factories to facilities that handle dangerous materials, which is why attaching one to the rover opens up a wide range of possibilities. We hope that this tutorial will be a good starting point for using robotic arms in your future projects.

This tutorial will teach you how to connect PincherX 100 Mobile to a Leo Rover, how to visualize it using RViz, and the basics of controlling it using MoveIt, a joystick, and the command line.

PincherX 100 Mobile robotic arm

M5x10 Allen head screw x 4

M3x6 Allen head screw x 4

Barrel jack cable (connected to a ~12 V power supply, e.g. the rover's battery)

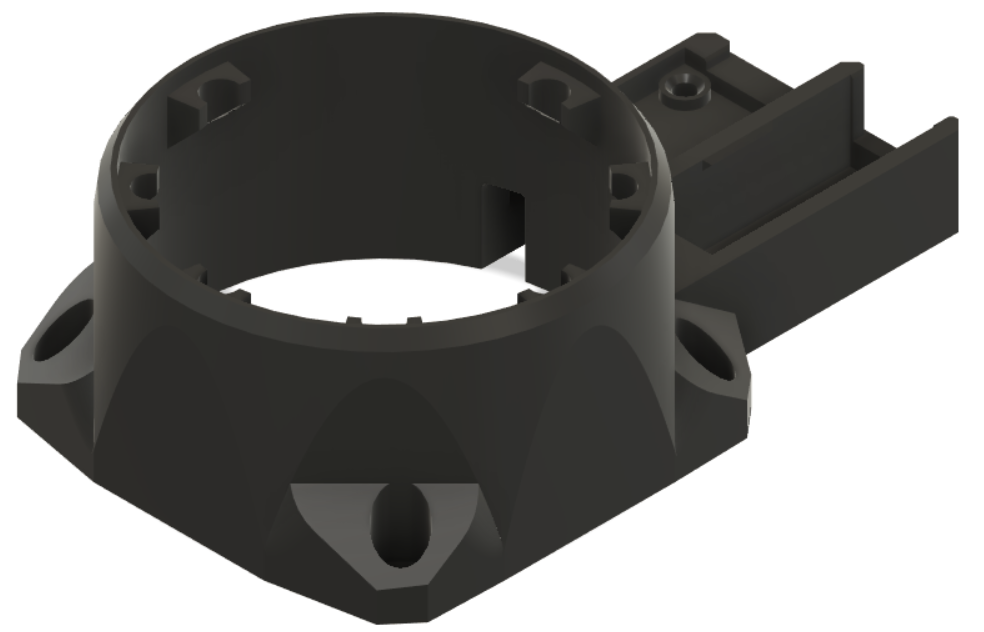

We've redesigned the base of the arm in order to make it easier to connect it to the rover.

If you want to print it on your own, you can find the STL file here. After printing it, you'll need to press 4x M3 heat-set inserts into the holes shown below.

To replace the stock base with the one you printed, remove all the screws at the bottom of the arm, gently lift the old cover and swap it for the new one. Remember to route the Dynamixel cable through the hole in the base. Then, screw the screws back in place.

Use 4x M5x10 Allen head screws to bolt the arm to the mounting plate of the Leo Rover.

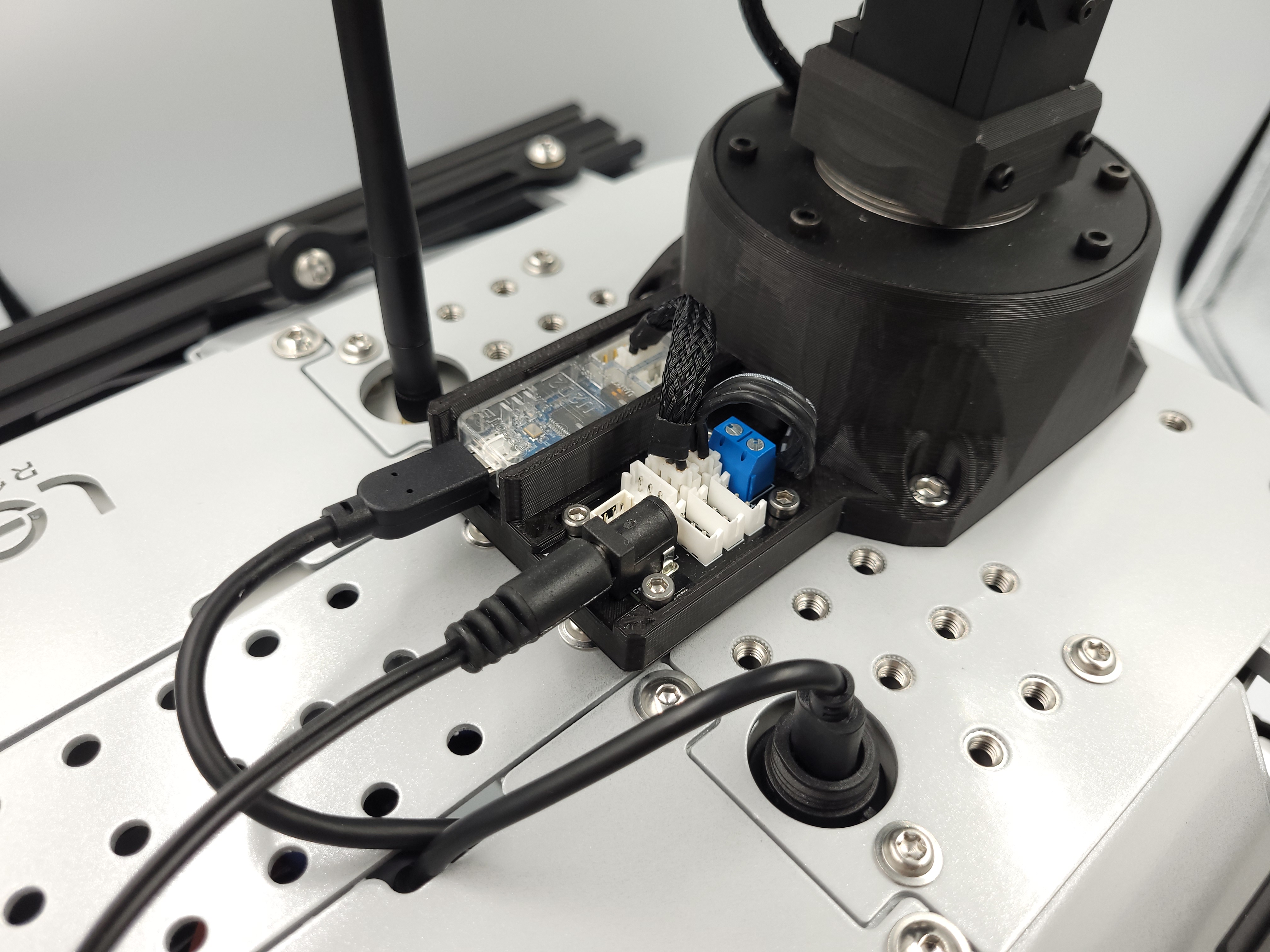

Press U2D2 into the deeper opening; it's going to snap in place and will be held securely inside.

Using 4x M3x6 Allen head screws, bolt the power/communication distribution board to the base.

If you've bought the arm from our online shop, it will come with the base already replaced.

Plug the Dynamixel cable coming out of the base of the arm into the power distribution board.

Connect U2D2 and the power distribution board with the short Dynamixel cable.

Connect the U2D2 to the rover using a USB cable.

The last step is to connect the barrel jack cable to the battery power supply (a powerbox might be useful here) and plug the other end into the power distribution board.

With everything connected it should look like this:

As installing the arm's ROS packages can be difficult, we have prepared a bash script that will guide you through the installation. To execute it, connect to the rover via SSH (see prerequisites) and type in the terminal on the rover:

You will be asked to provide the absolute path to your ROS workspace (if you don't have one on the rover, check this tutorial first). Type it in and confirm with enter.

Once the packages have been built, you can edit the environment setup file to point to your result space. Open the file in nano:

Comment out the first line by adding the # sign and add the source command for your workspace. The first 2 lines should look essentially like this:
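As a hedged sketch of that edit, the shell function below comments out the first line of a setup file and adds a `source` line for a workspace right after it. The function name `point_setup_at_workspace`, the `devel/setup.bash` location of the result space, and any paths you pass in are assumptions for illustration, not something taken from the installation script; try it on a copy of the file first.

```shell
# Sketch: comment out line 1 of a setup file and source a workspace instead.
# Assumption: the workspace's result space is <workspace>/devel/setup.bash.
point_setup_at_workspace() {
    setup_file="$1"   # file to edit (use a copy while experimenting)
    workspace="$2"    # absolute path to the ROS workspace
    awk -v ws="$workspace" '
        NR == 1 { print "# " $0; print "source " ws "/devel/setup.bash"; next }
        { print }
    ' "$setup_file" > "$setup_file.tmp" && mv "$setup_file.tmp" "$setup_file"
}
```

For example, `point_setup_at_workspace ./setup.bash.copy /home/pi/ros_ws` (both paths hypothetical) would leave the old first line in place as a comment, so the change is easy to revert by hand.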

Different operations on the arm require different modes for the ros_controller. Instead of changing the package configs, we will create our own config file and load it in the launch file. First, create your config file and open it for editing:

Then, paste these lines in the file (ctrl+shift+v):

To save the file and exit, use Ctrl+O and Ctrl+X, respectively.

Now, to add the arm's driver to the rover's launch file, open the robot.launch file:

and paste these lines somewhere between the <launch> tags:

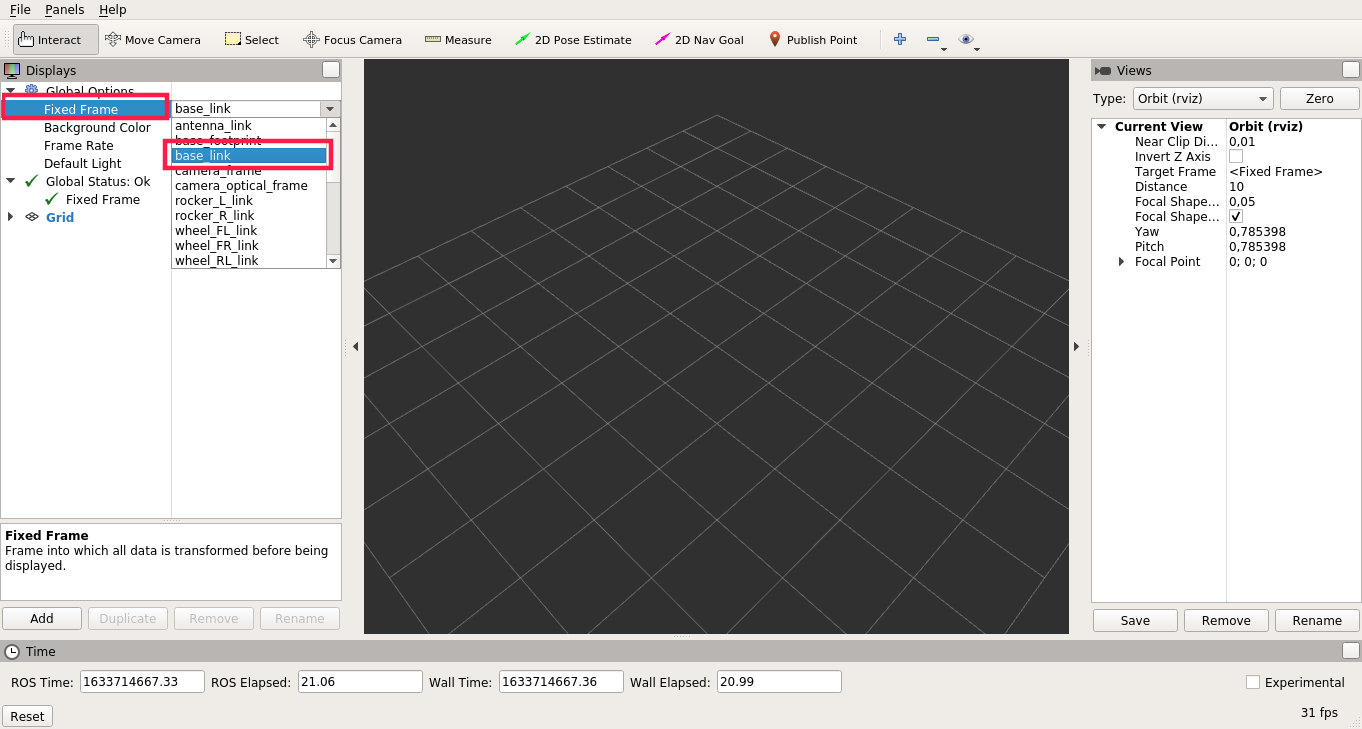

You can also edit the robot's URDF file to connect the arm's base link to the rover's model. To do this, open the robot.urdf.xacro file:

and paste these lines somewhere between the <robot> tags:

That's it! On the next boot, the arm driver node will start together with all the other nodes. You can manually restart the running nodes by typing:

Now that you have the driver running, you should see the new ROS topics and services under the /mobile_px100 namespace. For a full description of the ROS API, visit the ROS controllers configuration README page. You can test some of the features with the rostopic and rosservice command-line tools:

Retrieve the information about the arm (all joints):

Publish the position command to the elbow joint:

Turn off the torque on all joints:
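The three operations above can be sketched as small shell functions. This is an illustration under assumptions, not the tutorial's original commands: the service and topic names (`get_robot_info`, `commands/joint_single`, `torque_enable`) and their request fields are what we would expect from the Interbotix X-Series driver, and the function names are made up here. Confirm the names with `rosservice list` and `rostopic list` on your rover before relying on them.

```shell
# Sketch of the three checks, wrapped in functions so nothing runs on load.
# Assumption: names and request fields follow the Interbotix X-Series driver
# API; verify them with `rosservice list` and `rostopic list` first.
ARM_NS="/mobile_px100"

# Retrieve information about all joints.
arm_info() {
    rosservice call "$ARM_NS/get_robot_info" "{cmd_type: 'group', name: 'all'}"
}

# Publish one position command (in radians) to a single joint, e.g. elbow.
arm_move_joint() {
    topic="$ARM_NS/commands/joint_single"
    # Look up the message type instead of hard-coding the message package.
    rostopic pub -1 "$topic" "$(rostopic type "$topic")" "{name: '$1', cmd: $2}"
}

# Turn the torque off on all joints (the arm will go limp, so support it).
arm_torque_off() {
    rosservice call "$ARM_NS/torque_enable" "{cmd_type: 'group', name: 'all', enable: false}"
}
```

With the driver running, `arm_move_joint elbow -0.5` would send a single position command to the elbow joint. Querying the type with `rostopic type` keeps the sketch independent of which package ships the message definition in your installed driver version.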

There are other ways to control the arm, but it's better to run them on your computer rather than on the rover. To do so, you need a computer running Linux with ROS installed (see prerequisites) and a ROS workspace. Install the arm's ROS packages on it; you can use the same command that you used for installing the packages on the rover.

You also need your computer to be properly configured to communicate with the nodes running on the rover. For this, you can visit the Connecting other computer to ROS network section of the ROS Development tutorial:

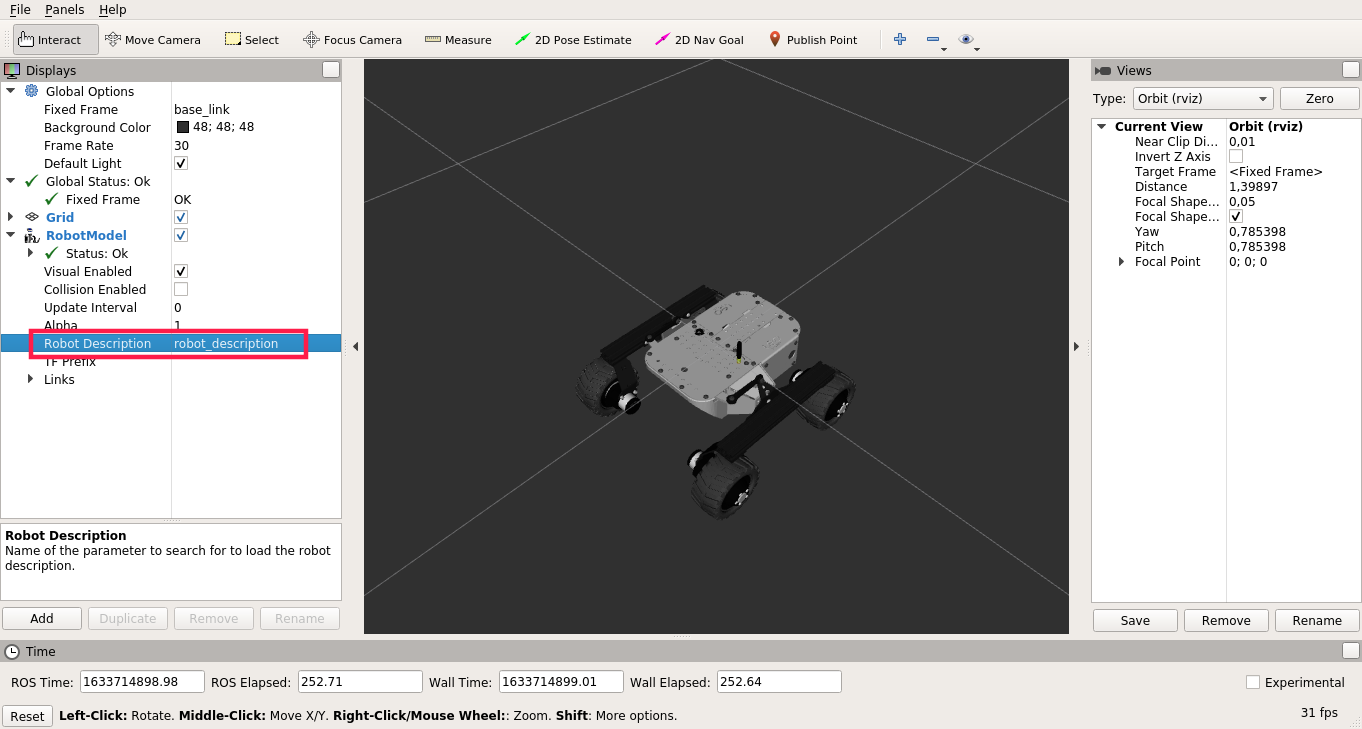

It's also useful to have the leo_description package installed on your computer for visualization purposes. To install it, type:

The MoveIt motion planning framework will allow us to plan and execute a collision-free trajectory to the destination pose of the end-effector. To use it, first make sure that the arm is powered and connected to the rover and that the ros_control node is running on the rover. You can check it with:

and check logs for the nodes in the /mobile_px100 namespace. You can also check the list of running nodes using:

And check if there are nodes in the same namespace listed.
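To make that check quicker, the node list can be filtered by namespace. The helper below is a minimal sketch; the name `in_namespace` is hypothetical, and it simply keeps the lines that start with the given namespace.

```shell
# Sketch: keep only the names that live under a given ROS namespace.
# Reads one name per line on stdin, e.g. the output of `rosnode list`.
in_namespace() {
    grep "^/$1/"
}
```

Typical use would be `rosnode list | in_namespace mobile_px100`. Because `grep` returns a non-zero status when nothing matches, the same pipeline can also be used as the condition of an `if` in a script.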

Once you are sure the nodes are running, you can go to your computer and run MoveIt. But first, you need to connect your computer to the rover's ROS network (see the tutorial linked in the previous section). You can check if your computer is connected with:

If the computer is correctly connected, you will see the list of the topics that are currently available in the ROS network (all of them are on the rover if you don't have any node launched on your computer).

To work correctly with the arm, MoveIt requires us to change the loaded arm mode back to the default one. To do so, first open the robot.launch file (on the rover):

And comment out the line where we specify the path to our mode config (replace the line in the file with the line below):

Now, you just need to restart the running nodes with:

To run MoveIt with the PincherX 100 Mobile arm, run on your computer:

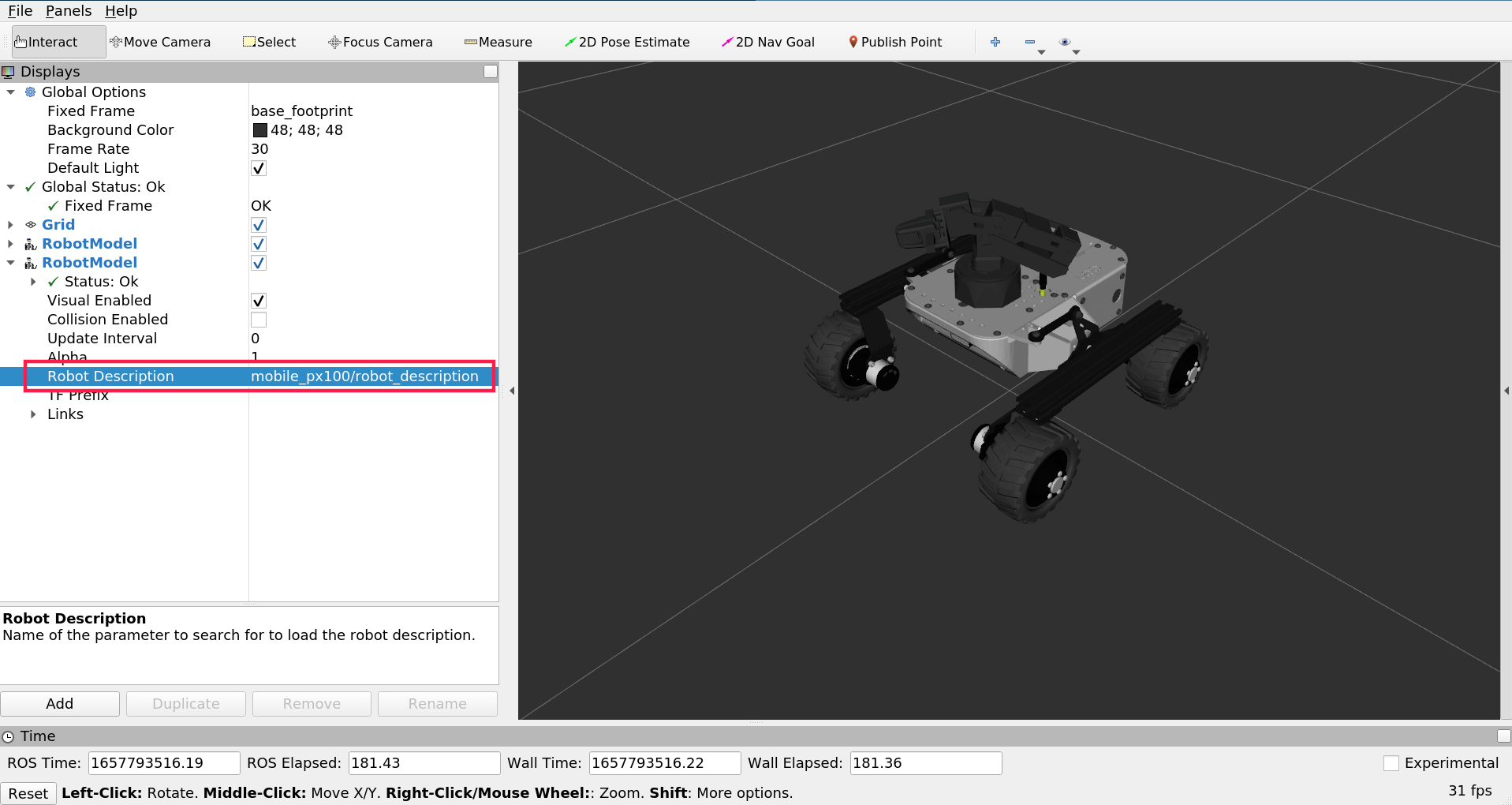

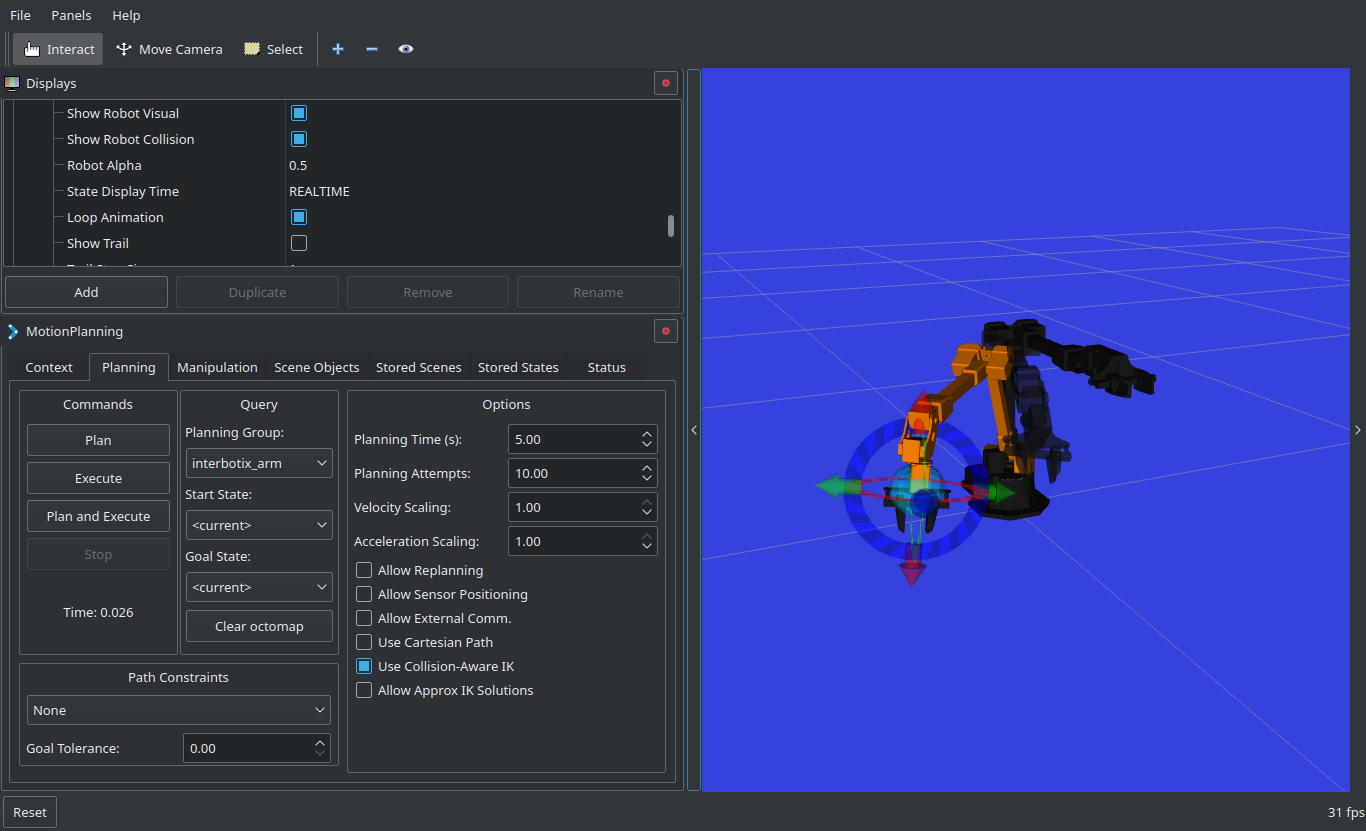

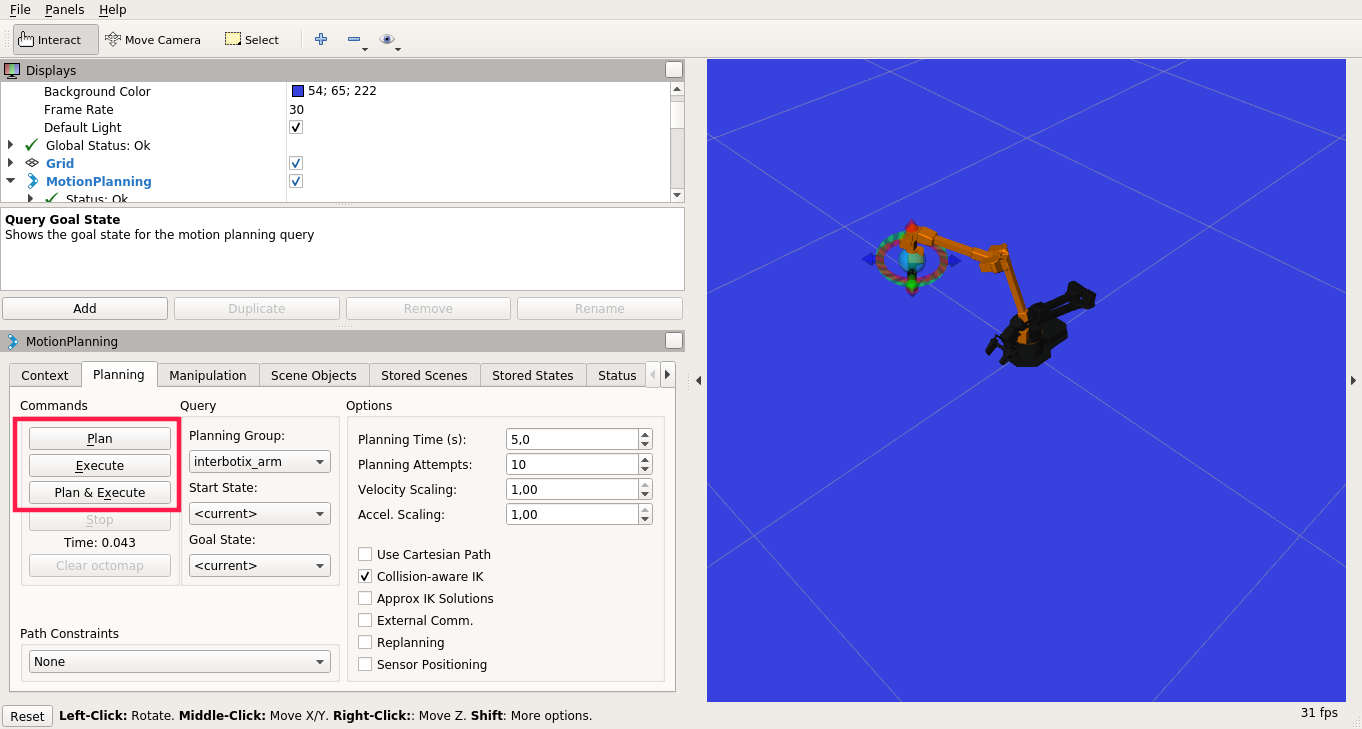

The MoveIt GUI should appear:

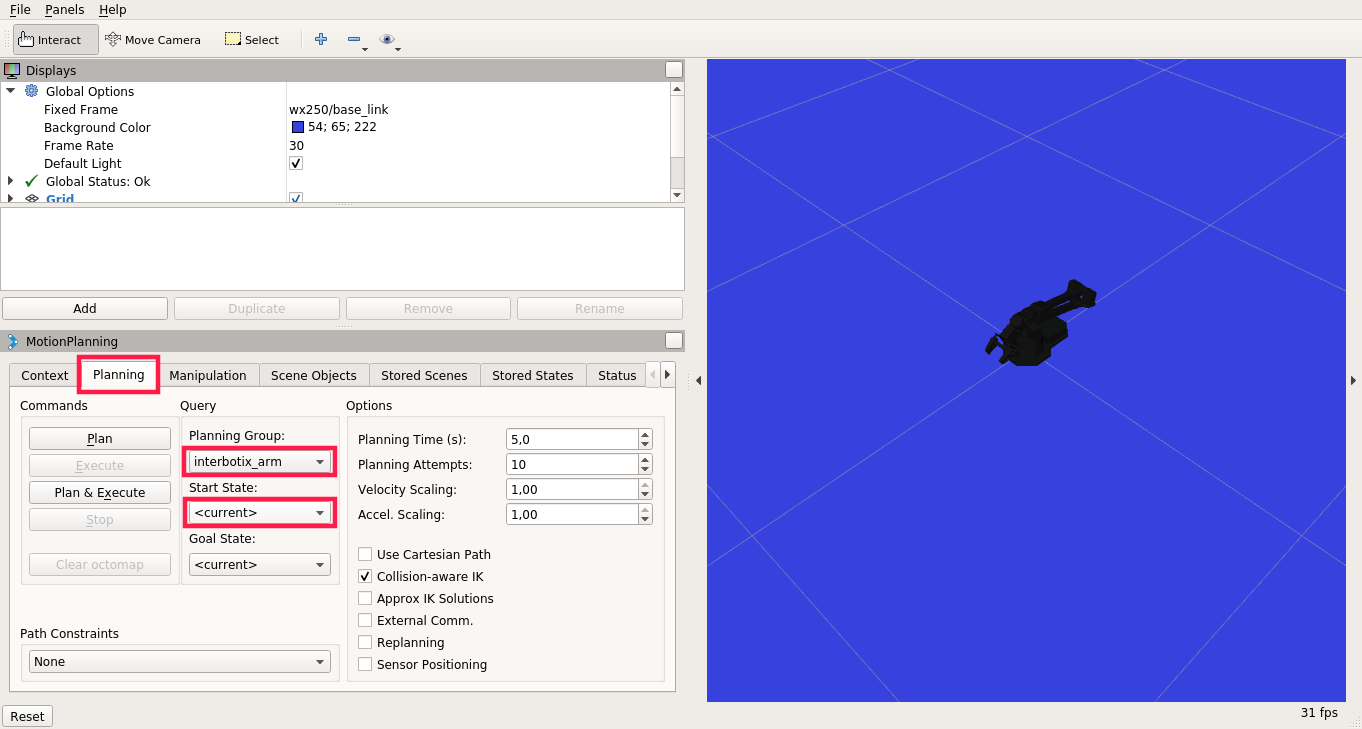

On the MotionPlanning panel, click on the Planning tab, choose interbotix_arm for the Planning Group and <current> for the Start State (to operate the gripper, change the Planning Group to interbotix_gripper).

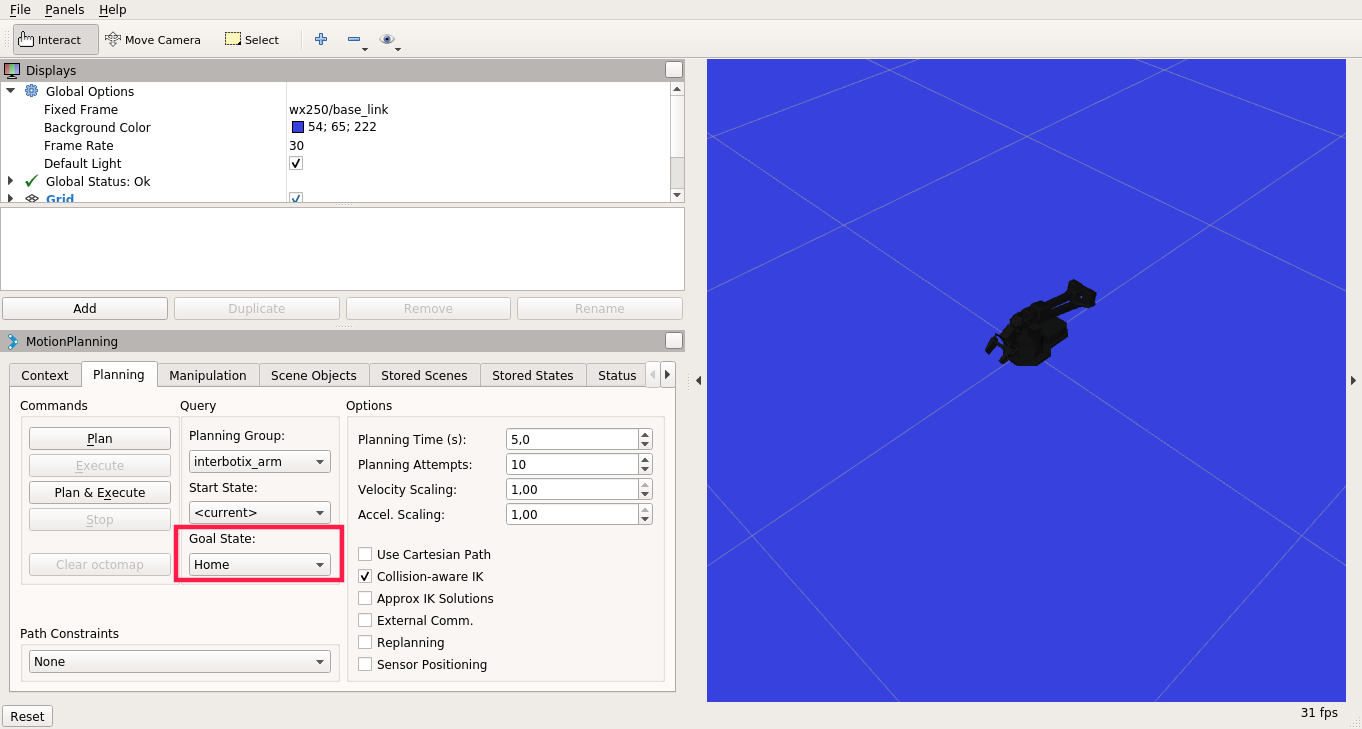

There are some predefined poses which you can choose for the Goal State, such as home, sleep or upright (for the gripper, the poses are open, closed and home).

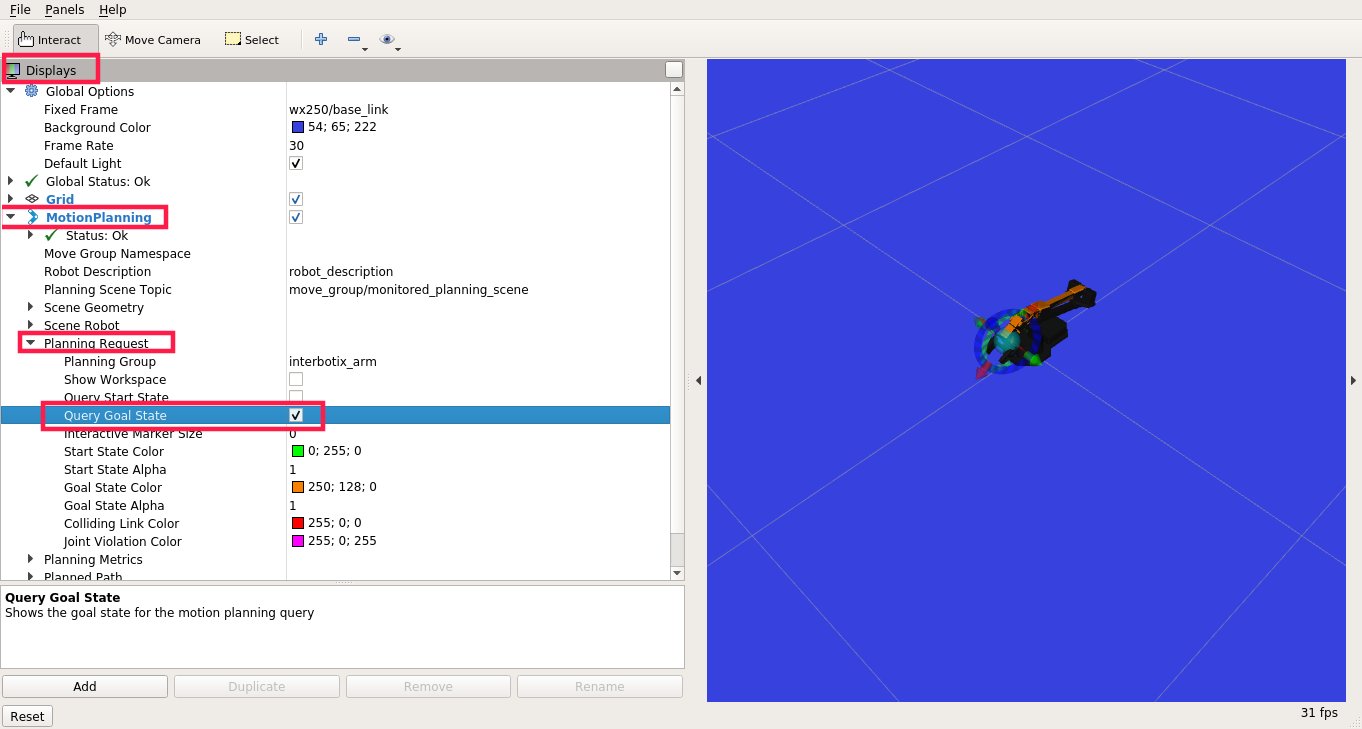

To set the pose manually, navigate to the Displays panel -> MotionPlanning -> Planning Request and check Query Goal State. You should now be able to manually set the end-effector pose for the goal state (this works only for the whole arm, not for the gripper).

When the goal state is set, click on the Plan button to plan the trajectory (the simulated trajectory visualization should appear) and on Execute to send the trajectory to the driver.

If you want to use the MoveIt capabilities in a Python script or a C++ program, please look at the interbotix_moveit_interface example.

The interbotix_xsarm_joy package provides the capability to control the movement of the arm (utilizing inverse kinematics) with a PS3, PS4 or an Xbox 360 joystick.

To use the package with the arm connected to your rover:

Aside from the previous examples, there is also a Python API for manipulating the arm. It is designed to work mainly with the position mode for the arm, the pwm mode for the gripper and the Time-Based-Profile (these are the default configs in the interbotix_xsarm_control package).

There are some example scripts that demonstrate the use of the API in the interbotix_ros_manipulators/interbotix_ros_xsarms/examples/python_demos directory.

The bartender.py demo performs some pick, pour and place operations. To run it, first, open the file in a text editor and search for this line:

Change wx250s to mobile_px100 and then, type on the terminal:

If everything went right, you should see the arm in action.
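The model-name change described above can also be scripted, which is handy if you want to try several demos. The function below is a sketch under assumptions: the name `set_robot_model` is made up, and it assumes the model name appears in the script as a double-quoted string. It writes a modified copy and leaves the original file untouched.

```shell
# Sketch: write a copy of a demo script with the robot model name swapped.
# Assumption: the model name appears as a double-quoted string in the script.
set_robot_model() {
    src="$1"; dst="$2"; old_model="$3"; new_model="$4"
    sed "s/\"$old_model\"/\"$new_model\"/g" "$src" > "$dst"
}
```

For instance, `set_robot_model bartender.py bartender_px100.py wx250s mobile_px100` would produce a copy (the output file name is hypothetical) that targets the mobile_px100 model. Matching the surrounding quotes avoids touching unrelated text such as comments that merely mention the model.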

You can check the other files in the directory for more examples. For a better understanding of how the API can be used, and for the API documentation, take a look at interbotix_xs_modules.

If you found this tutorial interesting, make sure to check out other tutorials we provide on our Knowledge Base site.